ZFS File System on Windows

ZFS is an open source file system originally developed by Sun Microsystems. Announced in September 2004, it first shipped in Solaris 10 (2006) under the CDDL (Common Development and Distribution License). ZFS is a truly next-generation file system that eliminates most, if not all, of the shortcomings found in legacy file systems and hardware RAID devices. Once you go ZFS, you will never want to go back.

ZFS is supported on a variety of operating systems, including Linux, which is free and can be installed on almost any computer, and it is fairly simple to move an existing ZFS pool to a different machine that supports ZFS. For comparison, Windows Storage Spaces is available on Windows 8 (Home and Pro) and above and on Windows Server 2012 and above.

Creating a ZFS pool

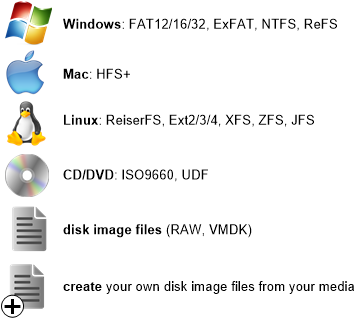

If you just want a multi-boot or dual-boot setup (including Unix) that shares the same /home, ZFS is arguably the only realistic solution. NTFS is another candidate, but it has certain problems, such as trouble with Unix-like (rather than Windows-like) file names and the utilities that rely on them, and ZFS is safer. ZFS may be the only crash-safe file system (it is copy-on-write rather than journalling) that both Unix and GNU/Linux can write to safely.

A developer has much of OpenZFS running on Windows as a native kernel module, but the functionality currently missing includes ZVOL support and the ability to compile ZFS on top of ZFS, and some bugs remain, as outlined in the project's GitHub repository.

Related feature requests for Windows include: make ReFS available on all Windows 10 SKUs; fix the Storage Spaces performance problems, which ZFS and btrfs do not have; add ZFS and btrfs features to Storage Spaces; add ZFS support to Windows 10; and add btrfs support to Windows 10. There is a matching request in the Windows 10 subreddit. Windows itself has no OS-level support for ZFS, so, as other posters have said, your best bet is to use a ZFS-aware OS in a VM.

We can create a ZFS pool from different kinds of devices:

a. using whole disks

b. using disk slices

c. using files

a. Using whole disks

I will not be using the OS disk (disk0).
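A minimal sketch of creating a pool on a whole disk, assuming the pool name geekpool and the disk c1t1d0 used elsewhere in this article. The `run` helper is an addition of this sketch: it only prints each command unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Create a pool named geekpool on the whole disk c1t1d0, then check it.
run zpool create geekpool c1t1d0
run zpool list geekpool
```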

To destroy the pool:
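As a sketch, assuming the pool is named geekpool. Destroying a pool discards its data, so the helper below (an addition of this sketch) prints the command unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Destroy the pool and free its devices.
run zpool destroy geekpool
```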

b. Using disk slices

Now we will create a disk slice on disk c1t1d0 as c1t1d0s0 of size 512 MB.
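The slice itself is laid out with the interactive Solaris `format` utility, which is not shown here. Once c1t1d0s0 exists, a pool can be created on it, sketched below with a print-only `run` helper that is an addition of this sketch.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Create the pool on slice 0 of disk c1t1d0 instead of the whole disk.
run zpool create geekpool c1t1d0s0
```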

c. Using files

We can also create a zpool with files. Make sure you give an absolute path when creating the zpool.
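A sketch of a file-backed pool, assuming Solaris `mkfile` is available. The file path /file1 and the 100 MB size are hypothetical choices for illustration, and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Create a backing file (hypothetical path and size), then build the pool on it.
run mkfile 100m /file1
# The file must be given as an absolute path.
run zpool create geekpool /file1
```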

Creating pools with Different RAID levels

Now we can create a zfs pool with different RAID levels:

1. Dynamic stripe – It's a very basic pool that can be created with a single disk or a concatenation of disks. We have already seen zpool creation using a single disk in the example of creating a zpool with whole disks. Let's see how we can create a concatenated ZFS pool.
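A sketch of a two-disk concatenated (striped) pool. The second disk name c1t2d0 is an assumption for illustration, and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Listing several disks with no keyword stripes data across all of them.
run zpool create geekpool c1t1d0 c1t2d0
```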

This configuration does not provide any redundancy, so any disk failure will result in data loss. Also note that a disk added to a ZFS pool in this fashion may not be removed from the pool again; the only way to free the disk is to destroy the entire pool. This is due to the dynamic striping nature of the pool, which uses both disks to store the data.

2. Mirrored pool

a. 2 way mirror

A mirrored pool provides redundancy by storing multiple copies of the data on different disks. Here you can also detach a disk from the pool, as the data will still be available on the other disk.
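A sketch of creating a 2-way mirror and then detaching one side. The second disk name c1t2d0 is an assumption, and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# The "mirror" keyword makes the listed disks copies of each other.
run zpool create geekpool mirror c1t1d0 c1t2d0
# Detach one side; the pool keeps running on the remaining disk.
run zpool detach geekpool c1t2d0
```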

b. 3 way mirror
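A 3-way mirror only needs a third disk in the same command. The disk names c1t2d0 and c1t3d0 are assumptions, and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Three disks after "mirror" give three copies of every block.
run zpool create geekpool mirror c1t1d0 c1t2d0 c1t3d0
```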

3. RAID-Z pools

We can also have a pool similar to a RAID-5 configuration, called RAID-Z. RAID-Z comes in 3 types: raidz1 (single parity), raidz2 (double parity) and raidz3 (triple parity). Let us see how we can configure each type.

Minimum disks required for each type of RAID-Z:

1. raidz1 – 2 disks

2. raidz2 – 3 disks

3. raidz3 – 4 disks

a. raidz1
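A single-parity sketch using three disks. The disk names beyond c1t1d0 are assumptions, and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# "raidz" (same as raidz1) survives the loss of one disk.
run zpool create geekpool raidz c1t1d0 c1t2d0 c1t3d0
```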

b. raidz2
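A double-parity sketch using four disks. The disk names beyond c1t1d0 are assumptions, and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# "raidz2" survives the loss of any two disks.
run zpool create geekpool raidz2 c1t1d0 c1t2d0 c1t3d0 c1t4d0
```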

c. raidz3
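A triple-parity sketch using five disks. The disk names beyond c1t1d0 are assumptions, and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# "raidz3" survives the loss of any three disks.
run zpool create geekpool raidz3 c1t1d0 c1t2d0 c1t3d0 c1t4d0 c1t5d0
```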

Adding spare device to zpool

When a spare device is added to a ZFS pool, a failed disk is automatically replaced by the spare device, and the administrator can replace the failed disk at a later point in time. We can also share a spare device among multiple ZFS pools.

Make sure you turn on the autoreplace feature (a pool property, set with zpool) on geekpool:
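A sketch of adding a hot spare and enabling autoreplace. The spare disk name c1t3d0 is an assumption, and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Add c1t3d0 to geekpool as a hot spare.
run zpool add geekpool spare c1t3d0
# autoreplace is a pool property, so it is set with zpool rather than zfs.
run zpool set autoreplace=on geekpool
run zpool get autoreplace geekpool
```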

Dry run on zpool creation

You can do a dry run and test the result of a pool creation before actually creating it.
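The `-n` option of `zpool create` shows the layout that would be used without creating anything. A sketch with assumed disk names follows; the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# -n makes zpool display the would-be configuration and stop there.
run zpool create -n geekpool raidz2 c1t1d0 c1t2d0 c1t3d0
```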

Importing and exporting Pools

You may need to migrate the zfs pools between systems. ZFS makes this possible by exporting a pool from one system and importing it to another system.

a. Exporting a ZFS pool

To import a pool on another system, you must first explicitly export it from the source system. Exporting a pool writes all unwritten data to the pool and removes all information about the pool from the source system.
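A sketch of the export step, assuming the pool is named geekpool. The `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Flush pending writes and release the pool from this system.
run zpool export geekpool
```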

In a case where you have some file systems mounted, you can force the export:
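A sketch of the forced variant, again assuming the pool geekpool. The `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# -f forcibly unmounts the pool's file systems before exporting.
run zpool export -f geekpool
```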

b. Importing a ZFS pool

Now we can import the exported pool. To see which pools can be imported, run the import command without any options.
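A sketch of listing the importable pools. The actual listing depends on the system, so no output is shown; the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# With no arguments, import only lists pools that are available for import.
run zpool import
```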

In the output, each pool has a unique ID, which comes in handy when you have multiple pools with the same name. In that case a pool can be imported using the pool ID.

Importing Pools with files

By default the import command searches /dev/dsk for pool devices. To see pools that are importable with files as their devices, we can use:
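A sketch using the `-d` option, which points the search at another directory. The directory / is an assumption here (it matches a hypothetical backing file such as /file1), and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Search the given directory (assumed: /) instead of /dev/dsk for pool devices.
run zpool import -d /
```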

Okay, all said and done. Now we can import the pool we want:
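A sketch of importing the pool by name, assuming it is geekpool and that its file devices live under the assumed directory /. The `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Import geekpool, looking for its devices in the assumed directory /.
run zpool import -d / geekpool
```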

Similar to export, we can force a pool import:
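A sketch of the forced import, assuming the pool geekpool. The `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# -f imports the pool even if it appears to be in use by another system.
run zpool import -f geekpool
```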

Creating a ZFS file system

The best part about ZFS is that Oracle (or should I say Sun) has kept its commands pretty easy to understand and remember. To create a file system fs1 in an existing ZFS pool geekpool:
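A sketch of the creation step with the names used in this article. The `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# File systems are named pool/filesystem.
run zfs create geekpool/fs1
# List datasets to confirm the new file system and its mount point.
run zfs list
```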

By default, when you create a file system in a pool, it can take up all the space in the pool. So to limit the usage of a file system we define a reservation and a quota. Let us consider an example to understand quota and reservation.

Suppose we assign quota = 500 MB and reservation = 200 MB to the file system fs1. We also create a new file system fs2 without any quota or reservation. Now 200 MB of the 1 GB pool is reserved for fs1, and no other file system can use it. fs1 can also take up to 500 MB (its quota) from the pool, but only if that space is free. So fs2 can take up to 800 MB (1000 MB – 200 MB) of pool space.
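The arithmetic of this example can be checked with a few lines of shell. The variable names are made up for this sketch, and the pool is treated as 1000 MB as in the text.

```shell
# Sizes in MB, taken from the example above.
pool_mb=1000
fs1_reservation_mb=200
fs1_quota_mb=500

# Space guaranteed to fs1 is unavailable to every other file system.
fs2_max_mb=$((pool_mb - fs1_reservation_mb))

echo "fs1: guaranteed ${fs1_reservation_mb} MB, capped at ${fs1_quota_mb} MB"
echo "fs2: can grow to ${fs2_max_mb} MB"
```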

So if you don’t want the space of a file system to be taken up by other file systems, define a reservation for it.

One more thing: a reservation can’t be greater than the quota if a quota is already defined. On the other hand, when you run zfs list, the available space shown for the file system is equal to the quota defined for it (if the space is not occupied by other file systems), not the reservation as you might expect.

To set the reservation and quota on fs1 as stated above:
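A sketch using the values from the example (500 MB quota, 200 MB reservation). The `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Cap fs1 at 500 MB and guarantee it 200 MB.
run zfs set quota=500m geekpool/fs1
run zfs set reservation=200m geekpool/fs1
# Read both properties back.
run zfs get quota,reservation geekpool/fs1
```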

To set mount point for the file system

By default a mount point (/poolname/fs_name) is created for the file system if you don’t specify one. In our case it was /geekpool/fs1. You also do not need an entry for the mount point in /etc/vfstab, as it is stored internally in the metadata of the ZFS pool and mounted automatically when the system boots. If you want to change the mount point:
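A sketch of changing the mount point. The target directory /test is a hypothetical choice, and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Setting the mountpoint property remounts fs1 at the new location.
run zfs set mountpoint=/test geekpool/fs1
```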

Other important attributes

You may also change other important attributes such as compression, sharenfs, etc. We can also specify attributes while creating the file system itself.
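A sketch of both approaches. The second file system name fs2 and the mount point /test2 are assumptions for illustration, and the `run` helper is an addition that only prints unless `RUN=1` is set.

```shell
# Dry-run helper (not part of ZFS): prints the command; set RUN=1 to execute it as root.
run() { if [ "${RUN:-0}" = 1 ]; then "$@"; else echo "$*"; fi; }

# Change properties on an existing file system.
run zfs set compression=on geekpool/fs1
run zfs set sharenfs=on geekpool/fs1
# Or pass properties with -o when creating a file system.
run zfs create -o mountpoint=/test2 -o compression=on geekpool/fs2
```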